How to Read SHAP Plots in Binary Output

Hi there! During the last meetup of argentinaR.org (an R user group), Daniel Quelali introduced us to a new model validation technique called SHAP values.

This novel approach allows us to dig a little deeper into the complexity of the predictive model results, while also letting us explore the relationships between variables for each predicted case.

I've been using it with "real" data, cross-validating the results, and let me tell you it works.

This post is a gentle introduction to it, hope you enjoy it!

Find me on Twitter and Linkedin.

Clone this GitHub repository to reproduce the plots.

Introduction

Complex predictive models are not easy to interpret. By complex I mean: random forests, xgboost, deep learning, etc.

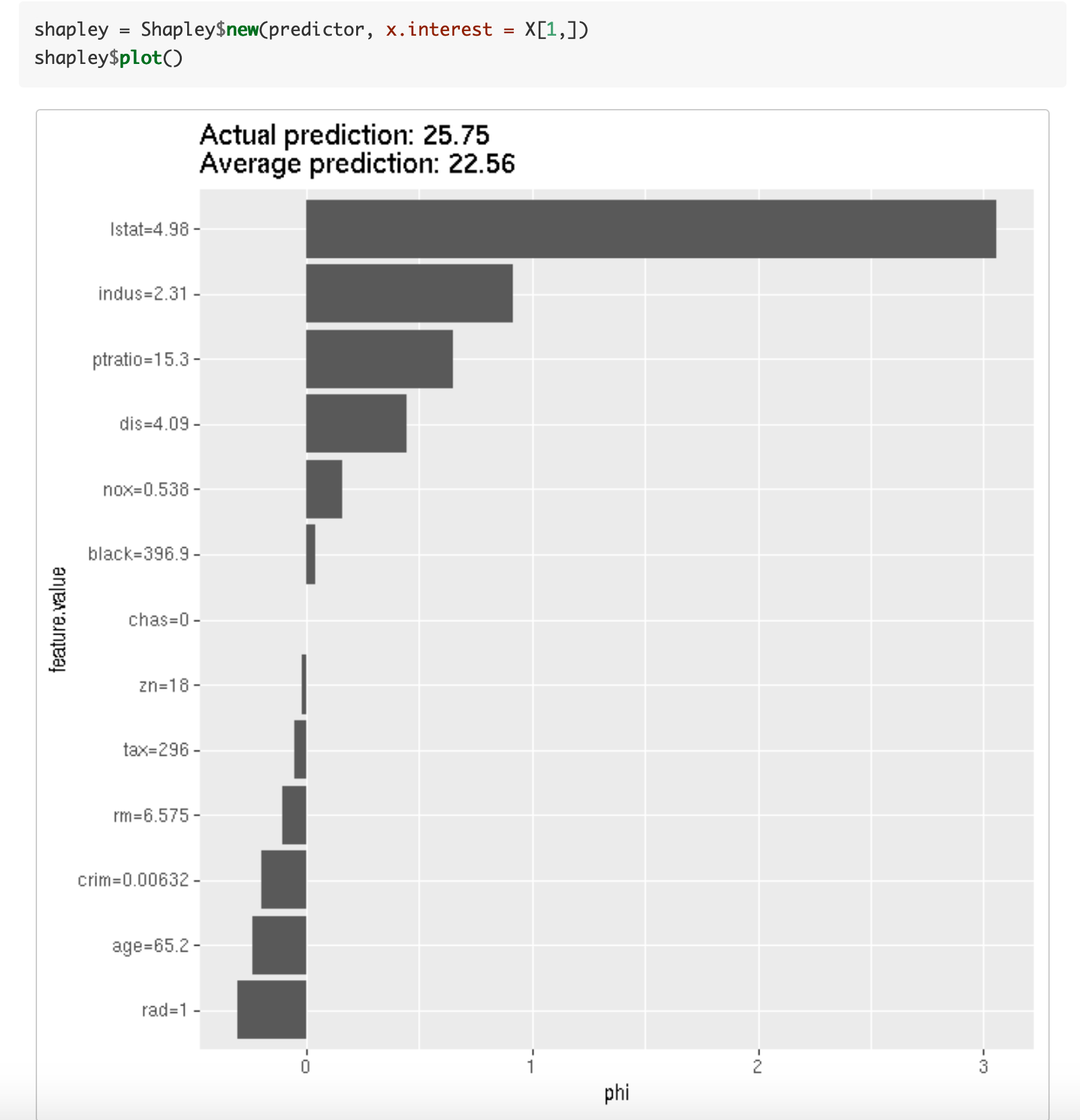

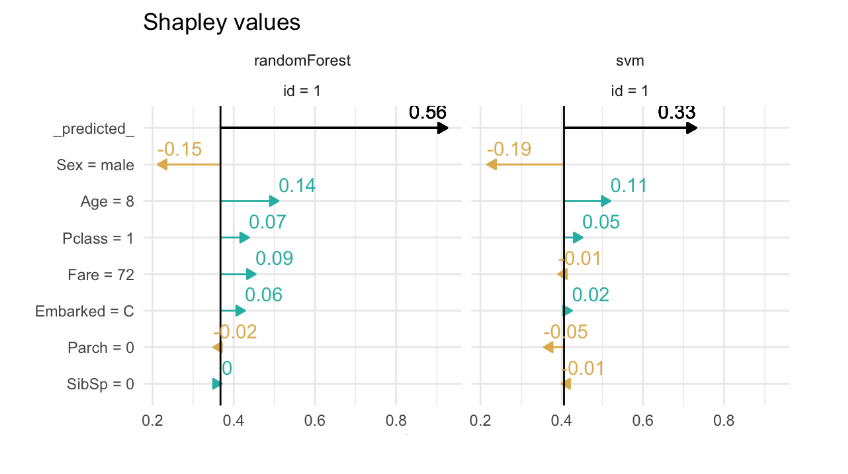

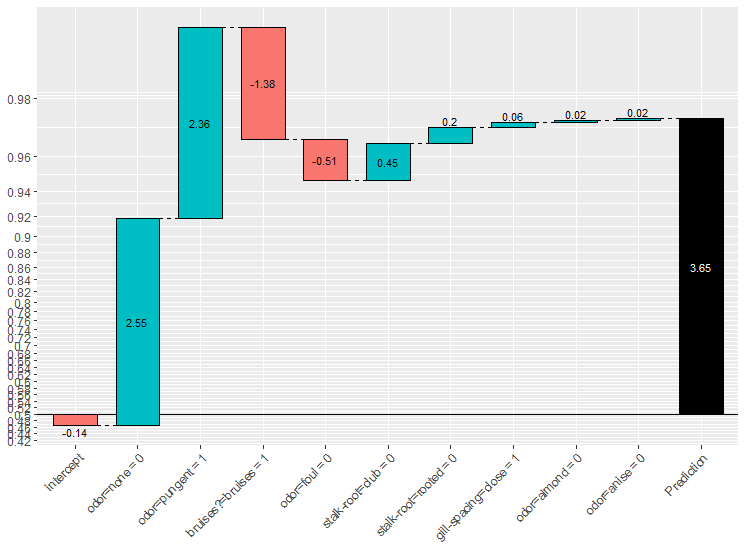

In other words, given a certain prediction, like a likelihood of buying = 90%, what was the influence of each input variable in reaching that score?

A recent technique to interpret black-box models has stood out among others: SHAP (SHapley Additive exPlanations), developed by Scott M. Lundberg.

Imagine a sales score model. A customer living in zip code "A1" with "10 purchases" arrives and gets a score of 95%, while another from zip code "A2" with "7 purchases" gets a score of 60%.

Each variable contributed to the final score. Maybe a slight change in the number of purchases changes the score a lot, while changing the zip code only contributes a tiny amount for that specific customer.

SHAP measures the impact of variables taking into account their interaction with other variables.

Shapley values calculate the importance of a feature by comparing what a model predicts with and without the feature. However, since the order in which a model sees features can affect its predictions, this is done in every possible order, so that the features are fairly compared.

Source
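The "every possible order" idea can be made concrete for the sales score example. The sketch below is a minimal illustration under stated assumptions: the `score` function, its coefficients and the `baseline` customer are invented, and "without the feature" is approximated by plugging in a single baseline value (xgboost's TreeSHAP uses a different, tree-based reference). It averages each feature's marginal contribution over all orderings, which is only feasible for a handful of features since there are p! orderings.

```r
# Exact Shapley values: average each feature's marginal contribution
# over every possible ordering of the features.
shapley_exact <- function(value_fn, features) {
  # All orderings of the feature names (simple recursive permutation)
  perms <- function(v) {
    if (length(v) <= 1) return(list(v))
    out <- list()
    for (i in seq_along(v)) {
      for (rest in perms(v[-i])) out[[length(out) + 1]] <- c(v[i], rest)
    }
    out
  }
  orders <- perms(features)
  phi <- setNames(numeric(length(features)), features)
  for (ord in orders) {
    present <- character(0)
    for (f in ord) {
      # Contribution of f given the features already "seen" in this ordering
      phi[f] <- phi[f] + value_fn(c(present, f)) - value_fn(present)
      present <- c(present, f)
    }
  }
  phi / length(orders)
}

# Hypothetical sales score model with an interaction term (invented numbers)
score <- function(zip_a1, purchases) {
  0.1 + 0.3 * zip_a1 + 0.03 * purchases + 0.01 * zip_a1 * purchases
}
x <- c(zip_a1 = 1, purchases = 10)        # the customer being explained
baseline <- c(zip_a1 = 0, purchases = 5)  # assumed "feature absent" values

# Value of a coalition S: features in S take the customer's values,
# the rest take baseline values
value_fn <- function(S) {
  z <- baseline
  z[S] <- x[S]
  score(z[["zip_a1"]], z[["purchases"]])
}

phi <- shapley_exact(value_fn, names(x))
phi       # zip_a1 = 0.375, purchases = 0.175
sum(phi)  # equals score(customer) - score(baseline) = 0.8 - 0.25
```

The last line shows the additivity property: the contributions always add up to the gap between the prediction being explained and the reference prediction.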

SHAP values in data

If the original data has 200 rows and 10 variables, the SHAP value table will have the same dimensions (200 x 10); xgboost's `predict` also appends one extra bias column, so its output is 200 x 11.
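To see the "one SHAP value per cell" shape without any extra package, a linear model is the simplest case: assuming independent features, the SHAP value of feature j in row i is beta_j * (x_ij - mean(x_j)). The sketch below uses the built-in `mtcars` data as a stand-in (the variable choice is arbitrary) and checks both the shape and that each row's SHAP values add up to the prediction minus the average prediction.

```r
# Linear SHAP (assuming feature independence): phi_ij = beta_j * (x_ij - mean(x_j))
fit <- lm(mpg ~ wt + hp + qsec, data = mtcars)
X <- as.matrix(mtcars[, c("wt", "hp", "qsec")])
beta <- coef(fit)[colnames(X)]

# Center each column, then scale it by its coefficient
shap <- sweep(sweep(X, 2, colMeans(X)), 2, beta, `*`)

dim(shap)  # same as dim(X): 32 rows x 3 variables

# Additivity: SHAP values of a row + average prediction = that row's prediction
pred <- predict(fit, mtcars)
all.equal(unname(rowSums(shap) + mean(pred)), unname(pred))
```

In this model there are no interactions, so the same input value always gets the same SHAP value; the row-by-row differences described next only appear when variables interact.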

The original values from the input data are replaced by their SHAP values. However, it is not the same replacement for all the columns. Maybe a value of 10 purchases is replaced by the value 0.3 for customer 1, but by 0.6 for customer 2. This change is due to how the variable interacts with the other variables for that customer. Variables work in groups and describe a whole.
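A tiny hypothetical model reproduces the 0.3 versus 0.6 example. Assume the score is purely an interaction, purchases times a second variable (the name `engagement` is invented), and use a single baseline of zero for both features as the "feature absent" reference (a simplification of what TreeSHAP does). With two features there are only two orderings, so the Shapley value can be written out directly.

```r
# Exact Shapley values for a two-feature model against one baseline point
shap_two <- function(f, x, b) {
  # Average of the two orderings: feature 1 first, or feature 2 first
  phi1 <- ((f(x[1], b[2]) - f(b[1], b[2])) + (f(x[1], x[2]) - f(b[1], x[2]))) / 2
  phi2 <- ((f(b[1], x[2]) - f(b[1], b[2])) + (f(x[1], x[2]) - f(x[1], b[2]))) / 2
  unname(c(phi1, phi2))
}

# Hypothetical model: the effect of purchases depends on engagement
f <- function(purchases, engagement) purchases * engagement
baseline <- c(0, 0)

customer1 <- c(purchases = 10, engagement = 0.06)
customer2 <- c(purchases = 10, engagement = 0.12)

shap_two(f, customer1, baseline)[1]  # 0.3
shap_two(f, customer2, baseline)[1]  # 0.6: same 10 purchases, different SHAP
```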

SHAP values can be obtained by doing:

```r
# One row per observation; one column per input variable plus a final bias column
shap_values <- predict(xgboost_model, input_data, predcontrib = TRUE, approxcontrib = FALSE)
```
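The call above assumes a model is already trained. Below is a self-contained sketch using the built-in `mtcars` data as a stand-in for the bike dataset (the variables and hyperparameters are arbitrary choices, not the ones from the post). It checks the shape of the output and the additivity property: for a `reg:squarederror` model, each row of contributions, bias included, sums to that row's prediction.

```r
library(xgboost)

# Stand-in data: predict mpg from a few mtcars columns
X <- as.matrix(mtcars[, c("wt", "hp", "qsec", "am")])
dtrain <- xgb.DMatrix(data = X, label = mtcars$mpg)

set.seed(1)
xgboost_model <- xgb.train(
  params = list(objective = "reg:squarederror", max_depth = 2, eta = 0.3),
  data = dtrain,
  nrounds = 50,
  verbose = 0
)

# Exact (TreeSHAP) contributions: 4 feature columns + 1 bias column
shap_values <- predict(xgboost_model, X, predcontrib = TRUE, approxcontrib = FALSE)
dim(shap_values)

# Additivity: contributions of each row add up to its prediction
pred <- predict(xgboost_model, X)
max(abs(rowSums(shap_values) - pred))  # tiny, float rounding only
```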

Example in R

After creating an xgboost model, we can plot the SHAP summary for a rental bike dataset. The target variable is the count of rents for that particular day.

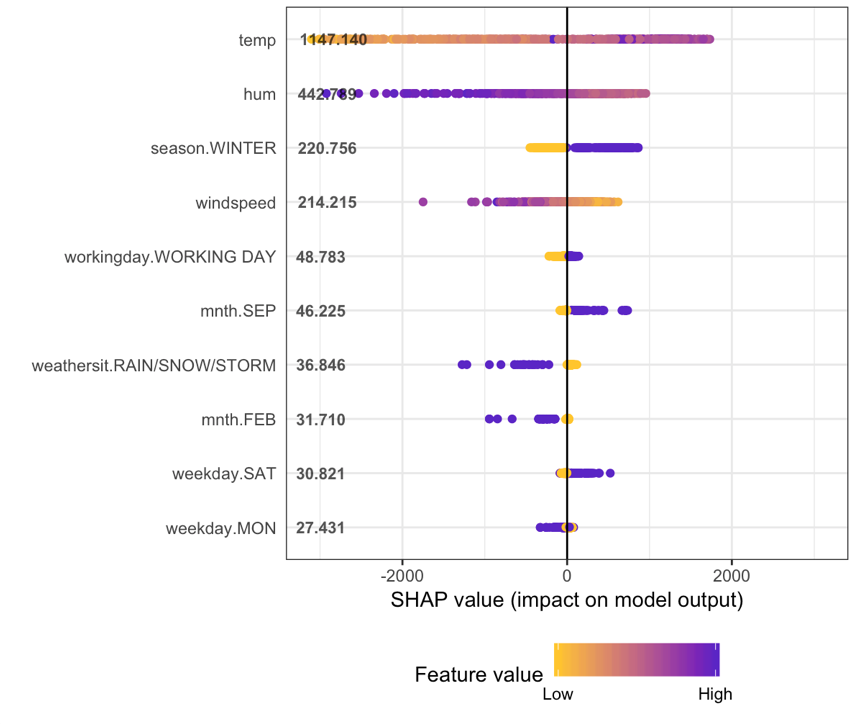

The function plot.shap.summary (from the GitHub repo) gives us:

How to interpret the SHAP summary plot?

- The y-axis indicates the variable name, in order of importance from top to bottom. The value next to each name is its mean absolute SHAP value.

- The x-axis is the SHAP value. For a binary classifier it is the change in log-odds, from which we can recover the probability of success; for a regression target such as the bike rental count, it is expressed in the target's own units.

- The color gradient indicates the original value of that variable. Boolean variables take only two colors, while numeric ones can span the whole spectrum.

- Each point represents a row from the original dataset.
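The two numeric readings in the list above can be sketched in a few lines. The helper names and the toy SHAP matrix are invented for illustration: `rank_shap` orders variables by mean absolute SHAP value, as the y-axis does, and `shap_to_prob` converts a row of log-odds contributions from a binary classifier into a probability with the logistic function.

```r
# Hypothetical helper: rank variables by mean absolute SHAP value.
# Expects feature columns only (drop any bias column first).
rank_shap <- function(shap) {
  sort(colMeans(abs(shap)), decreasing = TRUE)
}

# For a binary classifier with SHAP values in log-odds:
# probability = logistic(bias + sum of the row's SHAP values)
shap_to_prob <- function(bias, shap_row) {
  plogis(bias + sum(shap_row))
}

# Toy SHAP matrix (invented numbers): 3 rows, 3 variables
shap <- matrix(c( 0.5, -0.1,  0.02,
                 -0.7,  0.2, -0.01,
                  0.3, -0.3,  0.03),
               nrow = 3, byrow = TRUE,
               dimnames = list(NULL, c("hum", "season.WINTER", "weekday.SAT")))

rank_shap(shap)                # hum 0.50, season.WINTER 0.20, weekday.SAT 0.02
shap_to_prob(-1, c(1.2, 0.3))  # plogis(0.5), about 0.62
```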

Going back to the bike dataset, most of the variables are boolean.

We can see that high humidity is associated with high, negative SHAP values, that is, with a lower predicted target. "High" comes from the color and "negative" from the x value.

In other words, people rent fewer bikes when humidity is high.

When season.WINTER is high (or true), the SHAP value is high: people rent more bikes in winter. This is interesting since it sounds counter-intuitive. Note that the point dispersion in season.WINTER is smaller than in hum.

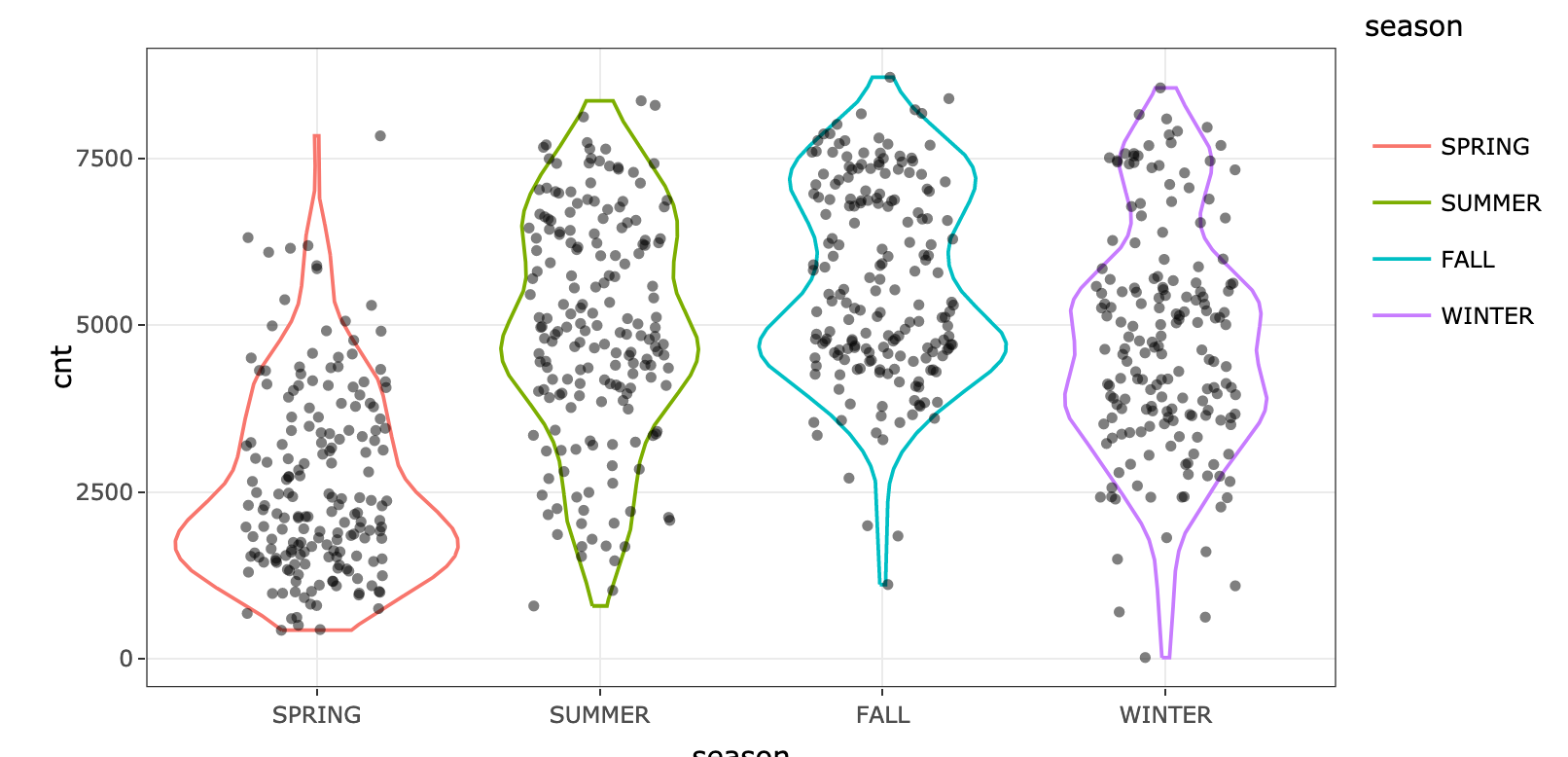

A simple violin plot of the variable season confirms the pattern:

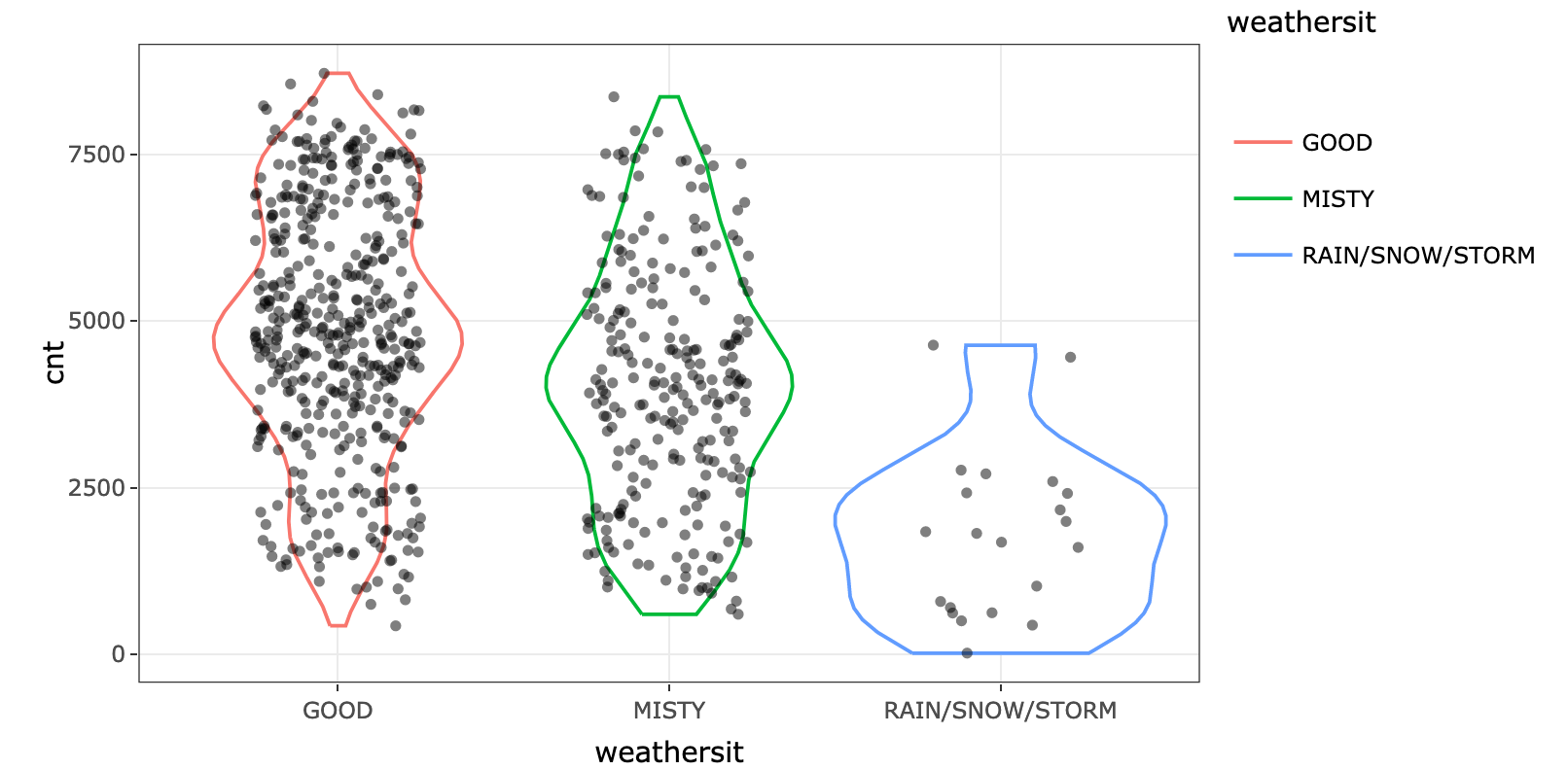
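The color-versus-position reading used for hum and season.WINTER can also be checked numerically. A minimal sketch, assuming you have one column of SHAP values and the matching column of original values: group the SHAP values by the original value and compare their mean and spread. The helper name and the six toy rows are invented.

```r
# Hypothetical helper: summarise one variable's SHAP values by its original value
shap_by_value <- function(shap_col, feature_col) {
  groups <- sort(unique(feature_col))
  g <- factor(feature_col, levels = groups)
  data.frame(
    value = groups,
    n = as.integer(table(g)),
    mean_shap = as.numeric(tapply(shap_col, g, mean)),  # position on the x-axis
    sd_shap = as.numeric(tapply(shap_col, g, sd))       # dispersion of the points
  )
}

# Toy rows for a boolean variable such as season.WINTER (invented numbers)
season_winter <- c(1, 0, 0, 1, 0, 1)
shap_winter <- c(0.30, -0.10, -0.12, 0.28, -0.08, 0.32)

shap_by_value(shap_winter, season_winter)
```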

As expected, rainy, snowy or stormy days are associated with less renting. However, if the value is 0, it barely affects bike renting: look at the yellow points around the 0 value. We can check the original variable and see the difference:

What decision can you draw by looking at variables weekday.SAT and weekday.Monday?

SHAP summary from the xgboost package

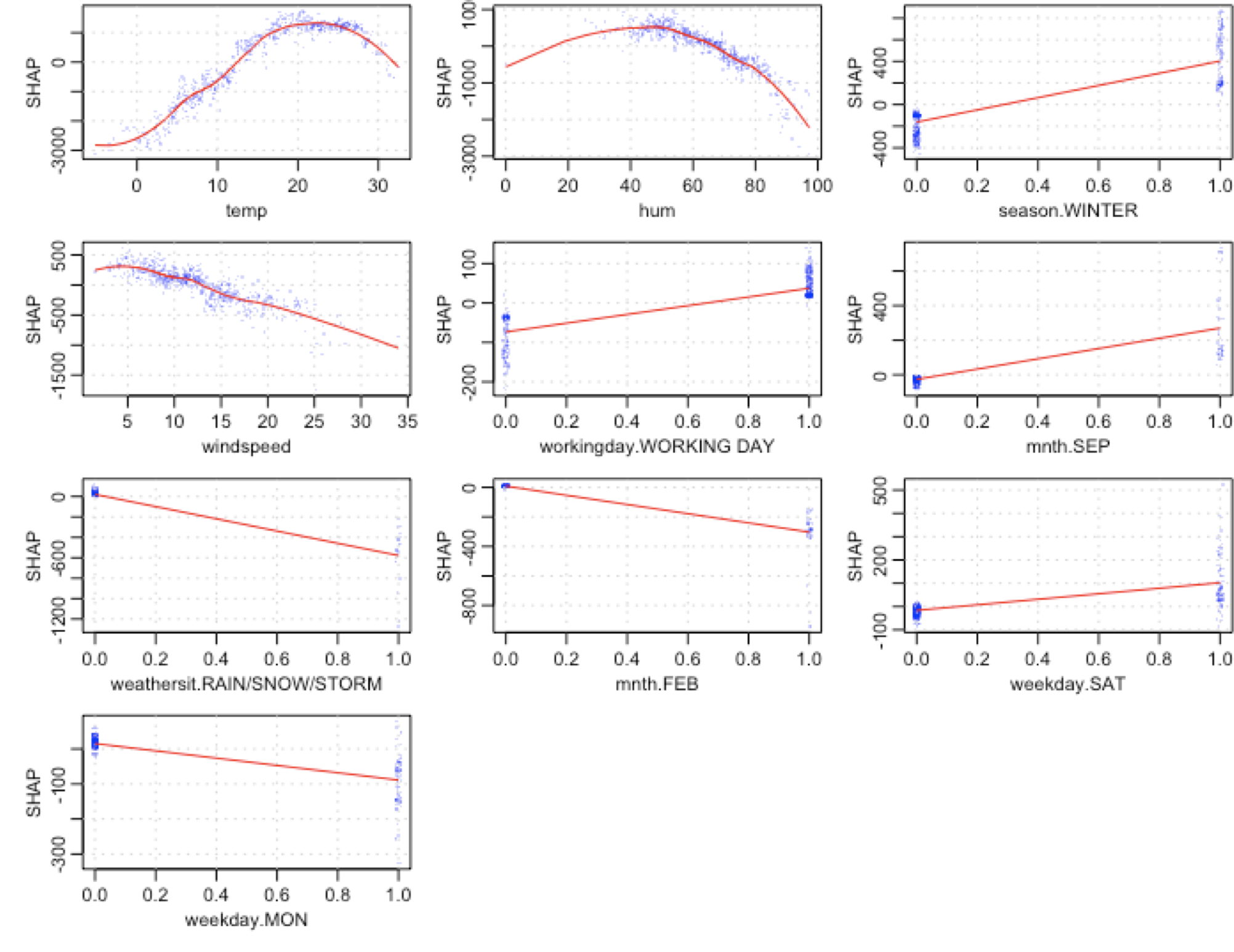

The function xgb.plot.shap from the xgboost package provides these plots:

- y-axis: SHAP value.

- x-axis: original variable value.

Each blue dot is a row (a day in this example).

Looking at the temp variable, we can see how lower temperatures are associated with a big decrease in SHAP values. Interestingly, around the value 22-23 the curve starts to decrease again: a clear non-linear relationship.
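xgb.plot.shap draws a smoothed trend line over the dots; a simpler way to locate a turning point like the one near 22-23 is to average the SHAP values within bins of the original variable. The sketch below uses synthetic data whose peak at 22.5 is chosen to mimic the description above (it is not the bike data), and the helper name and bin width are assumptions.

```r
# Hypothetical helper: binned mean of SHAP values along the original variable
binned_shap_trend <- function(x, shap, width) {
  breaks <- seq(floor(min(x)), ceiling(max(x)) + width, by = width)
  bins <- cut(x, breaks = breaks, include.lowest = TRUE)
  tapply(shap, bins, mean)
}

# Synthetic dependence data: SHAP rises with temperature, then bends down
temp <- seq(0, 34, by = 0.5)
shap_temp <- 400 - (temp - 22.5)^2

trend <- binned_shap_trend(temp, shap_temp, width = 5)
trend
names(which.max(trend))  # bin with the highest average SHAP value
```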

Taking mnth.SEP, we can observe that the dispersion around 0 is almost 0, while the value 1 is mainly associated with a SHAP increase of around 200, although on certain days it can push the SHAP value above 400.

mnth.SEP is a good example of interaction with other variables since, for the same value (1), the SHAP value can differ a lot. Which interactions with other variables explain this variance in the output? A topic for another post.
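As a pointer for that question, xgboost's R `predict` method can also return SHAP interaction values with `predinteraction = TRUE`. The sketch below again uses `mtcars` as a stand-in dataset with arbitrary hyperparameters. Diagonal cells of the returned array hold each variable's main effect and off-diagonal cells its pairwise interactions; summing over the last dimension recovers the ordinary SHAP values.

```r
library(xgboost)

# Stand-in data and model (not the bike dataset); parameters are arbitrary
X <- as.matrix(mtcars[, c("wt", "hp", "qsec", "am")])
dtrain <- xgb.DMatrix(data = X, label = mtcars$mpg)
set.seed(1)
model <- xgb.train(
  params = list(objective = "reg:squarederror", max_depth = 3),
  data = dtrain,
  nrounds = 30,
  verbose = 0
)

# Interaction values: n x (p + 1) x (p + 1) array (last index includes the bias)
inter <- predict(model, X, predinteraction = TRUE)
contrib <- predict(model, X, predcontrib = TRUE)

# Summing over the last dimension gives back the SHAP values
max(abs(apply(inter, c(1, 2), sum) - contrib))

# Mean absolute interaction strength for each pair of variables
strength <- apply(abs(inter), c(2, 3), mean)
round(strength, 3)
```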

R packages with SHAP

- Interpretable Machine Learning by Christoph Molnar.

- shapper: a wrapper around the Python shap library.

- xgboostExplainer: although it's not SHAP, the idea is really similar. It calculates the contribution of each value in every case by inspecting the structure of the trees used in the model.

Recommended literature about SHAP values 📚

There is a vast literature around this technique; check the online book Interpretable Machine Learning by Christoph Molnar. It covers Model-Agnostic Methods, and one of their particular cases, Shapley values, in a very clear way. An outstanding work.

From classical variable-ranking approaches like weight and gain, to SHAP values: Interpretable Machine Learning with XGBoost by Scott Lundberg.

A permutation perspective with examples: One Feature Attribution Method to (Supposedly) Rule Them All: Shapley Values.

--

If you have any questions, leave them below :)

Thanks for reading! 🚀

Other readings you might like:

- New discretization method: Recursive information gain ratio maximization

- Feature Selection using Genetic Algorithms in R

- 📗Data Science Live Book


Source: https://blog.datascienceheroes.com/how-to-interpret-shap-values-in-r/
